How to Fix Common Audio/Video Sync Problems

In the early days of motion picture, audio sync was easy: There wasn’t any. When you’re dealing with silent films, you have plenty of room to play fast and loose with frame rates.

The first hand-cranked cameras used in the industry could shoot footage at rates anywhere from 16 to 18 frames per second; there was no standardization. When the finished silent movies were screened for audiences, they were often played back considerably faster than that, at rates over 20 frames per second.

This system allowed the studios to save money on film stock, and let the movie theaters earn more money by turning audiences over at a healthy clip.

But with the birth of the “talkies”, we quickly started to standardize our frame rates to make accommodations for audio. Throw sound into the picture, and all of a sudden people start to notice when Charlie Chaplin starts sounding like Mickey Mouse.

Video Frame Rates for Audio People

Even when sound was first added to picture, workflow remained fairly straightforward for a little while.

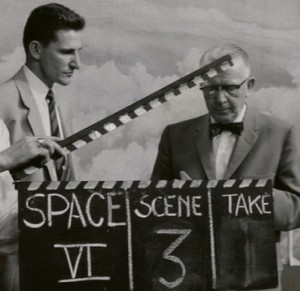

Photo courtesy of University of Houston Libraries.

In the U.S., we began to standardize the speed of film at 24 frames per second in the mid 1920s. This allowed for smooth motion capture and reliable audio sync, and it worked nicely with the 60Hz AC frequency coming out of our power outlets.

On the consumer end, movie houses figured out that they could cut down on “flicker” by simply flashing each of these 24 frames twice, for a total of 48 distinct illuminations per second.

Complications began with the advent of television. 24 frames per second may have looked pretty good in the low-light of the movie theaters, but with the greater brightness of TV sets, it caused noticeable flicker.

To combat this, 30 frames per second quickly became the U.S. standard for black-and-white video broadcast. On top of that, “interlacing”, a method of drawing each frame as two passes of alternating scan lines, was used to achieve a full 60 “fields” of illumination per second and cut down on flicker even further.

Converting to this new format wasn’t terribly difficult. A process called “Telecine Transfer” was invented for the U.S. market. The 24 frames of a film could be converted to 30 frames of video through “2:3 pulldown”: the film frames would alternately be held for either 2 or 3 video fields each, effectively stretching 4 frames of film across 5 frames of video.
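The 2:3 cadence is easier to see when it's written out. Here is a minimal Python sketch, assuming that each film frame is held for 2 or 3 interlaced fields in alternation and that consecutive fields are then paired into video frames. The function name `pulldown_2_3` is made up for illustration and isn't taken from any telecine tool.

```python
def pulldown_2_3(film_frames):
    """Spread film frames across video frames using a 2:3 field cadence."""
    fields = []
    for i, frame in enumerate(film_frames):
        repeats = 2 if i % 2 == 0 else 3  # alternate: 2 fields, then 3 fields
        fields.extend([frame] * repeats)
    # Two consecutive fields make up one interlaced video frame.
    return [(fields[j], fields[j + 1]) for j in range(0, len(fields) - 1, 2)]


video = pulldown_2_3(["A", "B", "C", "D"])
print(video)
# [('A', 'A'), ('B', 'B'), ('B', 'C'), ('C', 'D'), ('D', 'D')]
print(len(pulldown_2_3(list(range(24)))))  # 30
```

Four film frames come out as five video frames, two of which mix fields from neighboring film frames. Scale that up and 24 film frames fill 30 video frames.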

Europe, however, came to settle on a standard of 25fps, which made good sense for their AC frequency of 50Hz. They ended up sticking with this frame rate for both film and video, even if their TVs exhibited a bit more flicker early on.

Since the Europeans adopted a single frame rate for both formats, no conversion was necessary for their own domestic productions. But when American films were shown on TV in Europe, the stations would simply speed them up by about 4%.

This leads us to Common Sync Problem #1: Audible Pitch Shift.

Today, digital technology allows us to speed up sound without increasing its pitch. Although this is supposed to be part of the contemporary U.S.-Europe conversion process, it’s not always done as it should be.

If you’re dealing with audio that has been converted from one of these film frame rates to the other, keep an ear open for audible pitch shift. It happens less often now than in the past, but it’s still worth listening for.

At 4%, a change in pitch is noticeable in a way that the matching change in motion is not. This becomes even more apparent in cases where the internal camera sound is properly pitch-shifted but externally recorded audio is not.

Keep in mind that this 4% pitch drift can occur in situations where sound and picture appear to be in proper sync. Test tones, like the conventional 1kHz “2 pop” can help you evaluate sync and pitch on completed projects. When dealing with raw footage, particularly on smaller-budget foreign-market projects, you may want to refer back to the original unconverted rate, and adjust pitch if needed.
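To put a number on that pitch shift, here is a rough Python sketch that converts a speed change into semitones. It assumes the audio is simply played faster with no pitch correction, and the helper name `speed_change_to_semitones` is hypothetical.

```python
import math


def speed_change_to_semitones(source_fps, target_fps):
    """Pitch shift, in semitones, when material is sped up from source_fps to target_fps."""
    ratio = target_fps / source_fps
    return 12 * math.log2(ratio)  # 12 semitones per doubling of frequency


shift = speed_change_to_semitones(24, 25)
print(f"{(25 / 24 - 1) * 100:.2f}% faster, {shift:.2f} semitones sharp")
# 4.17% faster, 0.71 semitones sharp
```

That is roughly seven tenths of a semitone, which is plenty for a musician or anyone familiar with the source material to notice.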

The Coming of Color

Things really started to get complicated when color entered the picture. In the U.S., the 30fps video standard just didn’t leave enough extra bandwidth to include color information along with the picture.

The solution was almost brilliantly simple: slow down the video frame rate by 0.1%. No one would notice the difference in picture or in pitch, but this new frame rate of 29.97 would free up enough bandwidth to include color in the broadcast.

Ironically, it’s the brilliant simplicity and transparent fidelity of this solution that leads to so many of our synchronization headaches today.

And that’s what leads us to Common Sync Problem #2: Sound Drifts Against Picture.

Issues can arise when a camera’s picture and internal sound are slowed down by 0.1%, but multi-track sound that’s recorded on a separate device is left to play at its original speed.

Any difference in pitch that may result should be slight enough as to escape detection. You might not even notice any synchronization issues within the first couple of minutes either. But over time, the sound-to-picture drift will become glaringly unmistakable.

By the time you’re several minutes in, the slowed-down picture will have drifted until it’s a few frames late against your external sound. After 10 minutes, you’ll be off by almost 20 frames. And once you get past a half-hour or so, the sound can be off by nearly two seconds, making for a thoroughly unwatchable program.
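The drift is simple arithmetic, and a short Python sketch makes it easy to check for any program length. It assumes a constant 0.1% speed mismatch and counts frames at 29.97 fps. The function name and defaults are illustrative only.

```python
def drift_frames(minutes, fps=29.97, speed_error=0.001):
    """Frames of sound-to-picture offset accumulated after `minutes` of playback."""
    drift_seconds = minutes * 60 * speed_error
    return drift_seconds * fps


for minutes in (1, 5, 10, 30):
    print(f"{minutes:>2} min: {drift_frames(minutes):5.1f} frames")
```

At 10 minutes the result is about 18 frames, in line with the "almost 20 frames" figure above.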

Look out for this issue whenever you’re dealing with footage that’s shot on a high-quality camera capable of shooting at true 24 fps. If there is externally recorded sound associated with this frame rate, it’s possible that someone along the line will forget to slow it down when the picture is telecined and then “pulled down” to a frame rate of 29.97.

Although rare, the opposite can also be a problem: Many home and prosumer cameras don’t shoot at true 24fps. Instead, they might shoot at 23.976. This can actually be a good thing, because the filmmakers who use these kinds of cameras don’t have to remember to tell anyone to slow down any external sound by 0.1%.

However, if a sound person is told the film is being shot at “24 fps” when it’s really 23.976, unnecessary speed adjustment may be made somewhere down the line.

Sample Rates for Video

In the music world, 44.1kHz has long been the standard sampling rate. Blind tests suggest that with proper anti-aliasing filters and good converters, there is little-to-no benefit to using 48kHz sampling rates for music recording. Adopting a slightly lower 44.1kHz standard did, however, allow artists to fit more music on a single disc, and let multitrack DAWs handle more processing with fewer hiccups.

In video, the standard sample rate instead settled at 48kHz. As mentioned, this had little to do with sound quality. The common argument for this format is that 48,000 is evenly and easily divisible by 24 (duh), as well as 25 and 30. No such luck with 44,100, which is compatible enough with video rates, but doesn’t play nice with the number 24 at all.
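You can check the divisibility argument yourself by counting audio samples per frame. The Python sketch below uses exact fractions so that rounding doesn't hide anything. The helper name `samples_per_frame` is made up for this example.

```python
from fractions import Fraction


def samples_per_frame(sample_rate, fps):
    """Exact number of audio samples that fall within one picture frame."""
    return Fraction(sample_rate, fps)


for rate in (48_000, 44_100):
    for fps in (24, 25, 30):
        spf = samples_per_frame(rate, fps)
        note = "whole" if spf.denominator == 1 else "fractional"
        print(f"{rate} Hz at {fps} fps: {float(spf):g} samples per frame ({note})")
```

At 48,000 Hz every one of these frame rates gets a whole number of samples per frame. At 44,100 Hz, 25 and 30 fps work out evenly, but 24 fps lands on 1837.5.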

To complicate matters, you may very occasionally see sample rates of 47,952 Hz or 48,048 Hz. That’s 48,000 Hz plus or minus 0.1%. Some cameras shoot at 24 fps with a 48.048 kHz sampling rate, so that the result of a 0.1% audio/video pulldown is a 48,000 Hz file at the correct speed.
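Here is a small Python sketch of why 48,048 Hz is a convenient starting point. It assumes the pulldown ratio is exactly 1000/1001, the same ratio that turns 30 fps into 29.97, which the text rounds to 0.1%. The names below are illustrative.

```python
from fractions import Fraction

# Assumed exact pulldown ratio; "0.1%" in the text is a rounded figure.
PULLDOWN = Fraction(1000, 1001)


def after_pulldown(sample_rate):
    """Effective sample rate once audio is slowed down along with the picture."""
    return sample_rate * PULLDOWN


print(float(after_pulldown(48_048)))         # 48000.0
print(round(float(after_pulldown(48_000))))  # 47952
```

Audio recorded at 48,048 Hz lands on exactly 48,000 Hz after the slowdown, while audio recorded at 48,000 Hz ends up at the odd-looking 47,952 Hz.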

This leads us to Common Sync Problem #3: Mismatched Sample Rates.

If your editing program expects a certain sample rate and you feed it a different one, it may know enough to convert things for you. Many of today’s programs are better than ever at sorting through this kind of thing. But not all software is created equal, and even with the best programs, files can sometimes get mis-labeled and glitches can occur.

Whatever your source and target sample rates are, the number one thing to do here is to make sure that they are consistent.

If you do have sync issues, check to see what sample rate your editing software is set to expect for your project. Then, make sure you’ve been feeding it files stamped at that rate. If a 44.1kHz file is being read as 48kHz without being properly converted, it will end up playing back significantly faster than intended. Similar issues can occur with the less common “pull up” and “pull down” rates of 47.952kHz and 48.048kHz.
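To get a feel for how far off a mislabeled file will run, here is a minimal Python sketch. It assumes the software plays the samples at the rate it expects without resampling. The function name `misread_duration` is hypothetical.

```python
def misread_duration(true_seconds, actual_rate, assumed_rate):
    """Playback length when samples recorded at actual_rate are clocked out at assumed_rate."""
    return true_seconds * actual_rate / assumed_rate


# A 3-minute clip recorded at 44.1kHz but read as 48kHz:
print(misread_duration(180, 44_100, 48_000))  # 165.375

# The same clip recorded at 48.048kHz but read as 48kHz runs slightly long:
print(round(misread_duration(180, 48_048, 48_000), 2))  # 180.18
```

The first case finishes almost 15 seconds early and is impossible to miss. The second is off by less than a fifth of a second over three minutes, which is exactly the kind of slow drift that goes unnoticed until late in a program.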

Other Simple Issues

One other common sync problem has to do with time code display rather than sound drift.

It’s fairly rare but completely possible to have audio and video that have been stamped with two different sets of time code: one with “Drop Frame” and the other with “Non-Drop.” Although they may stay in visual sync when played from the beginning, the time code readings will be another story.

The reason for these two types of time code is that in live broadcasts, it’s essential for the time code display to stay close to actual clock time out in the real world. But when we’re dealing with a pulled-down frame rate of 29.97, our time code is running slightly slow compared to a real world clock on the wall. Over the course of one hour, traditional non-drop time code will be behind a real world clock by about 3.6 seconds (or 0.1%).

Omitting or “dropping” a frame number or two every once in a while keeps time code in reasonable sync with real world clocks. So if you’re ever in a situation where the sound fits but the numbers don’t, that may be why.

Generally, you’ll be avoiding drop-frame operation unless you are told specifically to use it. It’s most common in live broadcast scenarios, and in my experience, you’re unlikely to see it used intentionally elsewhere.

Lastly, there’s a common non-synchronization issue to look for whenever you’re dealing entirely with in-camera sound. When producing talking head videos, it’s become something of a habit for location recordists to capture boom mic sound on one channel of the video tape, and sound from a lavalier mic on the other.

Whenever this is done, please do me a favor and make sure to pan these two signals to the center on mixdown. If there’s one sure mark of a student project, it’s hearing two composite audio sources unintentionally panned hard left and right.

It might not cause issues as glaring as a forgotten audio pull down, but just like all the simple synchronization issues on this list, it’s too easily avoided to be quite as common as it is. A little awareness can save a lot of time and energy.

Justin Colletti is a Brooklyn-based audio engineer, college professor, and journalist. He is a regular contributor to SonicScoop and edits the music blog Trust Me, I’m A Scientist.


Jonathan S. Abrams

January 5, 2013 at 7:29 pm (11 years ago)

Regarding The Coming of Color:

The difference between the Black and White 30 fps and Color 29.97 fps (ignoring non-drop and drop frame for the moment) was deemed necessary to maintain compatibility with black and white televisions when color broadcasts were transmitted.

The argument when color was developed was that the frequency of the color subcarrier would create beating with the sound subcarrier that would be visible on some black and white television sets. The sound carrier, however, is frequency modulated. Therefore, beating would have only occurred at a specific frequency. A GE engineer determined that if the frame rate was dropped by .1% (from 30 to 29.97), that the beating would be reduced, and compatibility would be maintained.

As a result of this change, 60Hz AC cannot leak into a video signal, or bars appear to roll through the picture every 17 seconds. Technicians I have worked with over the years describe this phenomenon as a video ground hum.

The equation that has driven audio and video engineers mad by creating this non-whole number for video sync is: [(number of scanning lines per frame•frames per second)/2]•455=color subcarrier frequency.

When the appropriate numbers are inserted, it becomes: [(525•29.97)/2]•455 –> (15,734.25/2)•455 –> 7,867.125•455 –> 3,579,542

The NTSC adopted this equation, and could not change the lines per frame (or all TV sets would be obsolete), so they changed the frame rate. The idea behind the number 455 is frequency interleaving of the video and color signals, which would minimize interference between brightness (luminance) and color (chrominance) data. The number 455 produces a result that is an odd multiple of half the line rate.
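The equation above is easy to reproduce in a few lines of Python. This sketch runs it twice: once with the rounded 29.97 used in the worked example, and once with 30000/1001, which is assumed here to be the exact NTSC color frame rate. The function and constant names are illustrative.

```python
from fractions import Fraction

LINES_PER_FRAME = 525
MULTIPLIER = 455


def color_subcarrier_hz(frame_rate):
    """[(lines per frame * frames per second) / 2] * 455, kept as an exact fraction."""
    line_rate = LINES_PER_FRAME * frame_rate
    return line_rate / 2 * MULTIPLIER


rounded = color_subcarrier_hz(Fraction("29.97"))
exact = color_subcarrier_hz(Fraction(30000, 1001))

print(float(rounded))          # 3579541.875
print(round(float(exact), 2))  # 3579545.45
```

The rounded frame rate reproduces the 3,579,542 figure above. The exact ratio gives a value a few hertz higher, which is a reminder that "29.97" is itself an approximation.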

Maintaining compatibility with some black and white sets when audio at a specific frequency was transmitted has created synchronization headaches ever since color video was introduced.

Regarding Sample Rates for CDs:

The sampling rate of 44.1kHz was chosen for CDs because the number of used lines in an NTSC picture frame will divide evenly into 44,100. The total line count in NTSC is 525, and 35 of them are blank. That leaves 490 lines for the picture. 44,100/30 yields 1470 samples per frame. With 490 lines per frame, the samples per line is 1470/490, or 3.

Regarding Timecode:

In NTSC black and white timecode (30fps), the total number of frames per hour is 108,000 (30fps•60sec•60min). When the frame rate is reduced to 29.97 for NTSC color, there are .03 fewer fps. This causes the time being displayed on a timecode reader to be slightly slower than realtime. The math is (30-29.97)•60sec•60min=108 frames.

To make the 29.97 fps timecode match elapsed time, two (2) frame numbers are dropped at the start of every minute except those ending in zero (00, 10, 20, 30, 40, 50). The remaining 54 minutes of each hour are each missing two frame numbers from the count, and 54•2=108, which compensates for the difference. Many readers and generators indicate drop frame timecode by using semicolons instead of colons to separate the hours, minutes, seconds, and frames numbers.
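The drop-frame rule described above can be sketched in Python as follows. This is an illustration rather than a reference implementation: it takes a running count of 29.97 fps frames starting from zero and labels it both ways. The function names are made up, and the semicolon is placed only before the frames field, which is one common convention.

```python
def split_timecode(nominal_frames, separator):
    """Format a 30-per-second frame number as hh:mm:ss plus a frames field."""
    ff = nominal_frames % 30
    ss = nominal_frames // 30 % 60
    mm = nominal_frames // 1800 % 60
    hh = nominal_frames // 108000
    return f"{hh:02d}:{mm:02d}:{ss:02d}{separator}{ff:02d}"


def non_drop(frame_count):
    return split_timecode(frame_count, ":")


def drop_frame(frame_count):
    # Each 10-minute block holds 17982 real frames: one 1800-frame minute
    # with nothing skipped, then nine 1798-frame minutes that skip 2 numbers.
    blocks, rem = divmod(frame_count, 17982)
    skipped = 18 * blocks
    if rem >= 2:
        skipped += 2 * ((rem - 2) // 1798)
    return split_timecode(frame_count + skipped, ";")


one_hour = 108000 - 108          # frames actually shown in one real hour
print(non_drop(one_hour))        # 00:59:56:12
print(drop_frame(one_hour))      # 01:00:00;00
print(drop_frame(1800))          # 00:01:00;02
```

After one real hour the non-drop reading is 108 frames (about 3.6 seconds) behind the wall clock, while the drop-frame reading lands on the hour.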

Most of this information is part of a larger paper I wrote, which is available at https://files.nyu.edu/jsa226/public/timecode.pdf.

mp4guy

February 25, 2014 at 10:27 am (10 years ago)

Great post on audio/video sync problems.

If you want to fix it after encoding, here are some ways to do it:

http://newbrotricks.blogspot.in/2014/02/blog-post_3092.html or

http://lifehacker.com/5910943/fix-out+of+sync-audio-in-vlc-with-a-keyboard-shortcut

Ryan Petrus

October 7, 2014 at 3:32 pm (10 years ago)

To sync up multiple camera angles, I’d suggest trying out PluralEyes (http://pluraleyes.com).

And if you’re just shooting on 1 camera, check out DreamSync (http://dreamsyncapp.com). It’s not as cumbersome or expensive as PluralEyes and gets the job done for smaller quick projects.

There’s an app called DreamSync, a standalone application that’s built for the novice user as well as professionals. It syncs your footage and audio into one single clip so that it can then be imported into applications like iMovie, Windows Movie Maker, Adobe Premiere, Final Cut X, or any other editing suite.

http://dreamsyncapp.com

Both apps are effective depending on your editing workflow and how much (or little) time you want to dedicate to learning another interface for syncing audio/video footage.

Scritti Politti

February 5, 2015 at 4:42 am (9 years ago)

Interlacing was not done to reduce flicker. It was because they didn’t think a raster could draw an entire frame fast enough. That turned out to be wrong, but here we are with our new digital “advanced” TV system still dealing with this pathetic hack.

Not to mention the bullshit non-integer frame rates.

keyboardes

January 20, 2016 at 7:11 am (8 years ago)

Avdshare Video Converter will take change MP4 file frame rate as an example and it can also serve to change AVCHD, MTS, M2TS, MXF, XAVC, ProRes, MPG, AVI, FLV, MOV, WMV, MKV and almost all video format frame rates

Gerald Ncube

April 8, 2017 at 5:18 pm (7 years ago)

Hi

I am having a very stressful problem. I am making a music video, and when I shoot the video I play the song from a CD on a CD player and capture that sound together with the video footage. When I am editing, I use the camera sound to sync the picture to the original CD sound. My problem is that it syncs and matches at the beginning of the song, but as it goes on the video becomes faster than the original CD sound. How do I fix that? Please help. I did other songs and they are all fine, but now I just can’t get it right.

Gerald